One of the benefits of moving to Kubernetes is that your applications can run in a highly scalable environment. You can add pods quickly if you suddenly need more capacity, and then remove them when you no longer need them. But what if you are running a stateful application? When you terminate a container, everything written inside it is destroyed as its resources are released.

To support stateful applications, Kubernetes provides volumes, and in particular persistent volumes, whose data outlives the pods that use them. This article will explain the technical details of how to use and manage volumes within your Kubernetes deployment. We’ll also discuss what you should consider when planning and executing a migration to a Kubernetes environment.

Interested in more information on Kubernetes in production deployment? Watch the webinar series offering key insights on moving Kubernetes into production from Platform9 and key partners.

The Story of Containers and Persistent Storage

The first adopters of Kubernetes used it to deploy stateless applications. As the platform’s popularity grew, groups began to explore different ways to deploy it and leverage its power. Many organizations deploy Kubernetes clusters in their on-premises data centers, with public and private cloud providers, and in hybrid environments. It has also become popular in edge computing, because deploying a lightweight application at the edge allows providers to keep the cloud’s convenience while improving performance by moving the processing closer to the consumer.

As the various ways of deploying Kubernetes have increased, so has the need to persist data between calls. Determining how to combine ephemeral containers with persistent storage is a complex problem, but various solutions have been proposed, tested, and refined, despite several challenges.

One such challenge is that the requirements of persistent data stores vary by application. Some storage is meant for long-term use, which means that it can be slower. Other storage needs fast response times, and some requires a high degree of resiliency. Kubernetes therefore needs a generic solution that makes each of these storage types accessible without introducing a level of complexity that would make it unworkable.

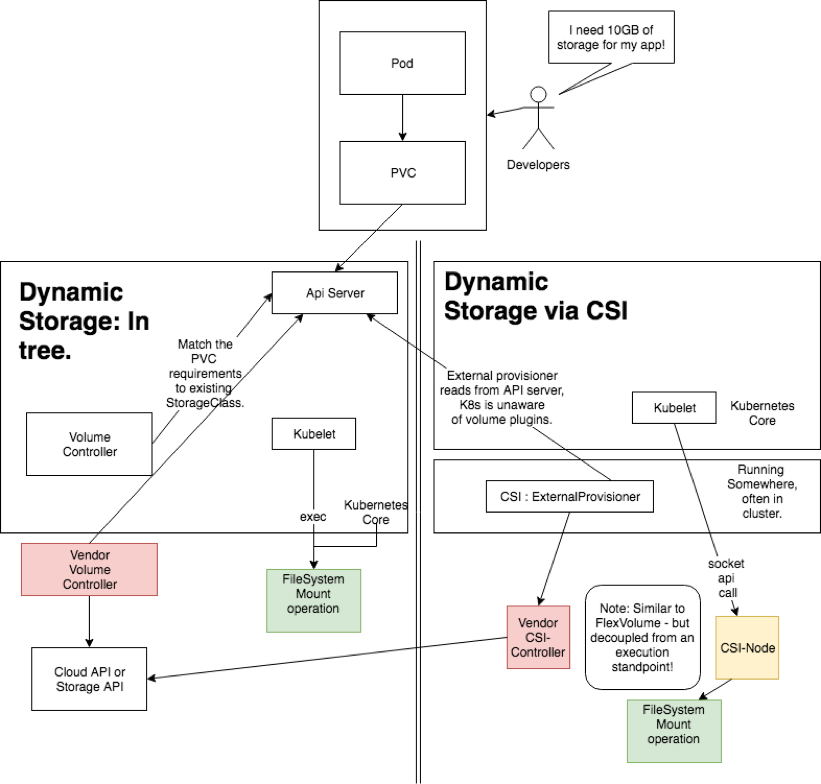

At the core of the persistent storage solution are two concepts: PersistentVolumes (PVs) and PersistentVolumeClaims (PVCs). A PV defines a unit of storage within the cluster. Like the rest of the Kubernetes ecosystem, it is an API object with configurable characteristics, such as capacity and access modes. A PVC is a request for storage with particular characteristics, such as a size, an access mode, and a storage class. Cluster administrators can define PVs ahead of time, which are then available as static resources within the cluster. If no existing PV satisfies a PVC and the claim names a storage class that has a provisioner, the cluster can dynamically provision a persistent volume to meet the need.
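The static PV/PVC relationship described above can be sketched as a set of manifests. The following is a minimal illustration, not a production configuration: the object names (`demo-pv`, `demo-claim`, `demo-app`), the `manual` storage class label, the `nginx` image, and the `/mnt/data` host path are all assumptions made for the example. The manifests are built as Python dictionaries and printed as JSON, which `kubectl apply -f` accepts alongside YAML.

```python
import json


def persistent_volume(name: str, size: str, host_path: str) -> dict:
    """A statically provisioned PV backed by a directory on a node.

    hostPath is only suitable for single-node test clusters; it is used
    here because it needs no external storage system.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": size},
            "accessModes": ["ReadWriteOnce"],
            "persistentVolumeReclaimPolicy": "Retain",
            "storageClassName": "manual",  # assumed label; must match the claim
            "hostPath": {"path": host_path},
        },
    }


def persistent_volume_claim(name: str, size: str) -> dict:
    """A PVC requesting storage of the same class and access mode."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": "manual",
            "resources": {"requests": {"storage": size}},
        },
    }


def pod_using_claim(name: str, image: str, claim: str, mount_path: str) -> dict:
    """A pod that mounts the claim; the pod never references the PV directly."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{
                "name": name,
                "image": image,
                "volumeMounts": [{"name": "data", "mountPath": mount_path}],
            }],
            "volumes": [{
                "name": "data",
                "persistentVolumeClaim": {"claimName": claim},
            }],
        },
    }


manifests = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [
        persistent_volume("demo-pv", "10Gi", "/mnt/data"),
        persistent_volume_claim("demo-claim", "5Gi"),
        pod_using_claim("demo-app", "nginx", "demo-claim", "/data"),
    ],
}
print(json.dumps(manifests, indent=2))
```

Note the indirection: the pod names only the claim, and the cluster binds the claim to a PV that is at least as large as the request. That separation is what lets the application stay unaware of where the storage actually lives.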

More recently, Kubernetes added support for the Container Storage Interface (CSI), which builds on the PV/PVC model. CSI is a standard, extensible interface that lets storage vendors write plugins (drivers) that connect your cluster to block and file storage systems. Using CSI drivers, Kubernetes can access AWS EFS, AWS EBS, Azure Disk, Azure File, IBM Block storage, and other similar resources. One good example of a CSI-based storage provider is Portworx. Below, we will discuss the features that Portworx provides to illustrate best practices for managing and monitoring your storage.
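To make dynamic provisioning through a CSI driver concrete, here is a minimal sketch of a StorageClass and a claim that uses it. It assumes the AWS EBS CSI driver is installed in the cluster (its provisioner name is `ebs.csi.aws.com`); the class name `fast-block`, the claim name `orders-db-data`, and the `gp3` volume type are illustrative choices, and another driver would take a different provisioner name and parameters.

```python
import json


def storage_class(name: str, provisioner: str, parameters: dict) -> dict:
    """A StorageClass that delegates volume creation to a CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": provisioner,
        "parameters": parameters,  # driver-specific; passed through to the plugin
        "reclaimPolicy": "Delete",
        # Delay provisioning until a pod is scheduled, so the volume is
        # created in the same zone as the node that will use it.
        "volumeBindingMode": "WaitForFirstConsumer",
        "allowVolumeExpansion": True,
    }


def dynamic_claim(name: str, storage_class_name: str, size: str) -> dict:
    """A PVC that triggers dynamic provisioning by naming a StorageClass."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


# Assumed example values: an EBS-backed class and a 20Gi claim against it.
fast = storage_class("fast-block", "ebs.csi.aws.com", {"type": "gp3"})
claim = dynamic_claim("orders-db-data", "fast-block", "20Gi")

print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [fast, claim]}, indent=2))
```

With this arrangement no administrator has to create a PV in advance: when the claim is submitted, the driver named in the class creates the backing volume and Kubernetes creates a matching PV and binds it to the claim.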

Want to go deeper on storage? Read Kubernetes Storage: Dynamic Volumes and the Container Storage Interface.

Managing and Monitoring Storage

The characteristics of a best-in-class storage provider will be similar, no matter where your storage resides. Cloud-native storage is no different. Your storage solution needs to be available when your application needs it, to transfer data at the speed your application requires, and to be secured against potential threats from inside and outside your organization. Let’s explore how to meet these needs.

When we talk about availability, we’re talking about having storage resources ready when we need them. Highly available storage stays available even if some of the infrastructure that supports it fails. We’re also talking about data persistence and disaster recovery, along with backup processes and procedures that allow you to recover from more extensive outages and disasters.

Transfer speed is closely related to scalability. As an application is scaled up, so is the quantity of data that it produces and consumes. If your storage cannot scale alongside your application, it becomes the bottleneck that keeps you from reaching your full potential and providing the highest quality user experience.
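One way Kubernetes lets storage scale alongside an application is a StatefulSet with a volume claim template, which gives every replica its own PVC. The sketch below is an assumption-laden illustration: the names `db` and `data`, the `postgres:16` image, the `fast-block` storage class, and the sizes are hypothetical, and the headless Service named by `serviceName` would need to be created separately. The helper at the end lists the claim names Kubernetes derives, which follow the pattern `<template>-<statefulset>-<ordinal>`.

```python
import json


def stateful_set(name: str, image: str, replicas: int, template_name: str,
                 size: str, storage_class_name: str, mount_path: str) -> dict:
    """A StatefulSet whose replicas each receive a dedicated PVC."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            "serviceName": name,  # headless Service, defined elsewhere
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "volumeMounts": [
                            {"name": template_name, "mountPath": mount_path}
                        ],
                    }],
                },
            },
            # One PVC is created from this template for every replica.
            "volumeClaimTemplates": [{
                "metadata": {"name": template_name},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "storageClassName": storage_class_name,
                    "resources": {"requests": {"storage": size}},
                },
            }],
        },
    }


def expected_claim_names(manifest: dict) -> list:
    """Names of the PVCs the StatefulSet controller will create."""
    set_name = manifest["metadata"]["name"]
    spec = manifest["spec"]
    return [
        f'{template["metadata"]["name"]}-{set_name}-{ordinal}'
        for template in spec["volumeClaimTemplates"]
        for ordinal in range(spec["replicas"])
    ]


db = stateful_set("db", "postgres:16", 3, "data", "20Gi", "fast-block",
                  "/var/lib/postgresql/data")
print(json.dumps(db, indent=2))
print(expected_claim_names(db))
```

Scaling the set from three to five replicas would add two more claims rather than sharing existing ones, so capacity grows with the application. Scaling back down leaves the claims in place by default, which protects the data but means unused volumes need to be cleaned up deliberately.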

The security of your data is perhaps the most important thing – but security is a double-edged sword. You want authorized users and resources to be able to efficiently access the resources they need while simultaneously preventing unauthorized access. Identity management systems can be incredibly complex, and they need to strike a balance between being granular enough to secure each component of an extensive system, and being straightforward enough to be easy to manage and implement.

Portworx addresses each of these three areas. It provides access to a wide array of storage resources based on your application’s needs and its tolerance (or lack thereof) for slower or less resilient storage. Its infrastructure and tools are cloud-native and designed to scale alongside your applications, and they can be integrated with your corporate identity management system, which simplifies security administration and control.

Finally, Portworx enables you to monitor how your storage resources are performing in real time. Comprehensive metrics and analytics help you identify trends, validate performance, and catch problems before they become large enough to affect your systems.
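As a generic illustration of catching a storage problem early, the sketch below flags claims that are close to full. It does not use any Portworx API. It assumes you have already collected used and total bytes per claim from some metrics source; for example, the kubelet exposes `kubelet_volume_stats_used_bytes` and `kubelet_volume_stats_capacity_bytes` for mounted volumes. The sample values, claim names, and the 80% threshold are hypothetical.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass(frozen=True)
class VolumeSample:
    """One point-in-time reading for a single PersistentVolumeClaim."""
    claim: str
    used_bytes: int
    capacity_bytes: int


def utilization(sample: VolumeSample) -> float:
    """Fraction of the volume in use, between 0.0 and 1.0."""
    if sample.capacity_bytes <= 0:
        raise ValueError(f"{sample.claim}: capacity must be positive")
    return sample.used_bytes / sample.capacity_bytes


def claims_over_threshold(samples, threshold: float = 0.8) -> list:
    """Names of claims whose utilization meets or exceeds the threshold."""
    return sorted(s.claim for s in samples if utilization(s) >= threshold)


# Hypothetical readings; in practice these would come from your metrics system.
samples = [
    VolumeSample("data-db-0", 9 * GIB, 10 * GIB),
    VolumeSample("data-db-1", 4 * GIB, 10 * GIB),
    VolumeSample("logs-web-0", 19 * GIB, 20 * GIB),
]

for name in claims_over_threshold(samples):
    print(f"{name} is above 80% utilization")
```

A check like this is most useful when paired with a storage class that allows volume expansion, so that an alert can be resolved by growing the claim rather than by migrating data.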

Secrets for a Successful Migration

If you are looking to move to Kubernetes, you’re going to have many questions. As an open-source project, Kubernetes has a wide array of information available in its documentation as well as from other people who have learned by experimenting and making mistakes. You could blaze your own path forward using this wealth of information, but your frustration might be as extensive as your knowledge of Kubernetes by the time you’re finished.

What you really need is a migration strategy that draws on wider talent and expertise, which will increase your chances of success and free your team to focus on providing value to your customers. Thankfully, you can combine Platform9’s managed Kubernetes platform with storage solutions from its official partner, Portworx. Together, these providers have already done the hard work, saving you time and resources and giving you peace of mind.

Next Steps

If you would like an in-depth look at how Platform9 and Portworx interact, check out the blog post “Solving the Kubernetes and Storage Challenge” by Platform9’s Head of Solutions Engineering, Peter Fray, and Chief Strategist, Vamsi Chemitiganti. You can also learn more by visiting the Portworx website to explore their Kubernetes storage solutions.