Recently, we set out to answer an age-old Kubernetes mystery: “what’s different about my cluster’s profile RBAC?” Let’s say you are managing three environments for many developer teams. The promotion lifecycle goes “Development”, “Staging”, then “Production”. The Development environment is an internal, safe place for developers to develop. It isn’t highly available and doesn’t have super-sophisticated observability. Developers have enough permission to change access policies, so things can get out of sync with higher environments. Every once in a while you sync policies to bring the developers back to earth :).

Staging is where applications and services get promoted when they are ready for prime time. This environment is a replica of production. Access is very limited (it’s only accessible internally) and the policies need to match production exactly. Successful testing in staging means the app is ready for production. Even the slightest difference in policies could mean unhappy customers. So you can’t let things get out of sync.

Finally the golden environment, production. The one you tip-toe through and whisper about, so as to not wake the giants. This environment is always on. It’s where all apps and services are consumed. It’s where your customers are either very happy or very sad (there never seems to be a middle ground). You must get this one right.

The desired state of these environments is to have the exact same RBAC policies. This can be quite a tall order, so at least have a goal of understanding what the differences are and collaborating on those differences with others.

Kubernetes has a very powerful API that is happy to serve you all this information. So why would you need a third party for these comparisons? All you need is a really amazing script to crawl each cluster (even production) at a moment’s notice, and the confidence that you won’t (mistakenly) affect anything. Are you up for that? Why not take this debt off your shoulders and let someone else carry the burden? I am sure your time is better spent working on things that grow the business.

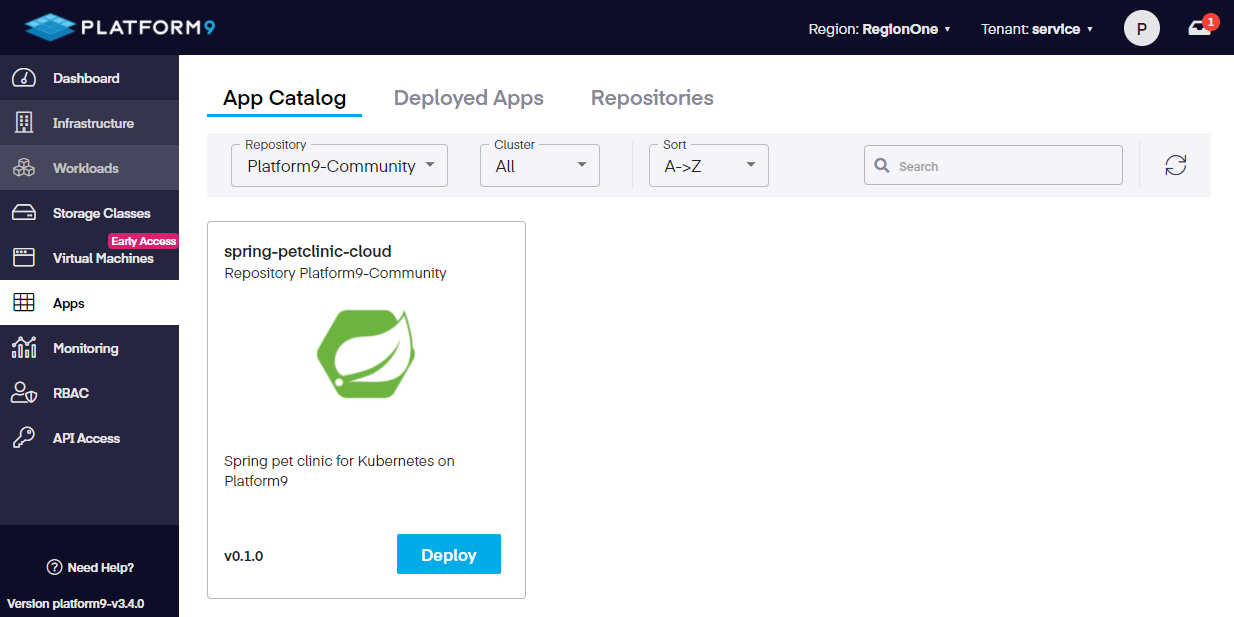

Would you rather click a button, get a report, and choose exactly when and where to make changes? Yes, so would I. Let’s take a look at the profiles engine and the new cluster profiles feature of Platform9 Managed Kubernetes (PMK).

Creating a cluster profile

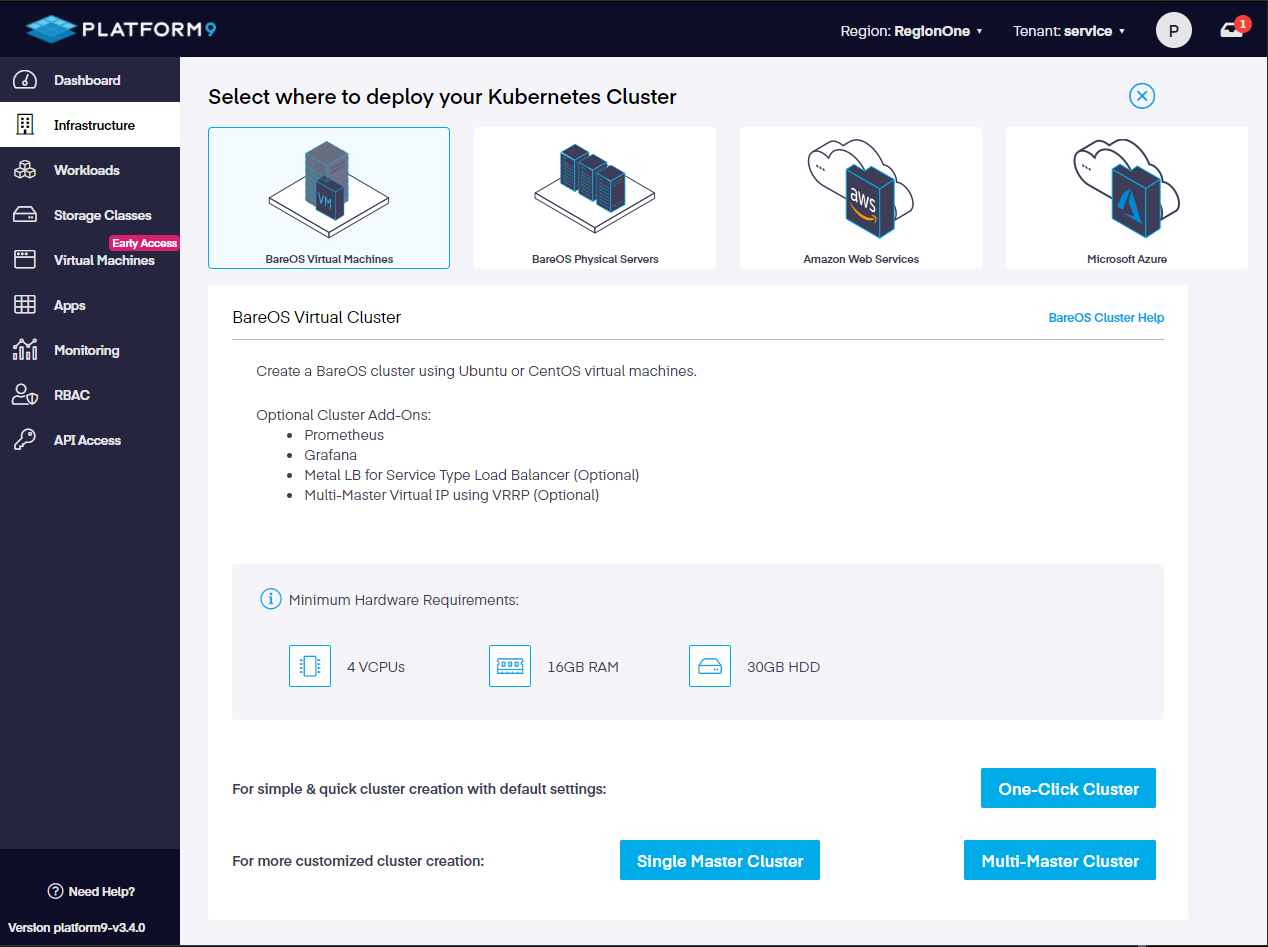

To compare cluster RBAC, you’ll need a profile. It’s your golden image. It’s what all other clusters hope to be one day… it’s most likely based on production. To create a profile, you simply tell PMK which cluster should be used to create a profile and follow the wizard.

You’ll be given the opportunity to choose which objects in each namespace should be part of the profile. This includes roles, cluster roles, role bindings, and cluster role bindings. It’s a lot to take in if there are multiple namespaces, so an easier way is to select all for each object type :). After you confirm your choices, the profile is created.

The profile goes through a few stages. The first is “creating”. This means… it’s being created. Then the profile will be a “draft”. This means the initial profile has been created, but it’s not quite ready for comparisons. Finally, it will be “ready”. This means the profile is ready to be deployed or compared against other clusters.

Changing a cluster profile

While creating the profile, did you notice there was never a chance to augment or add any roles? That comes after creation, when you are given the option to “edit”. You can add and change things all the way down to the verb. The wizard provides complete granularity for every single role.

After the profile is bound to clusters you can still edit and apply it, but it’s a good idea to treat profiles as immutable. Editing creates history, and a git repo is the right place for that kind of stuff. Refer to the “Automating analysis” section below for more details.

Applying the profile to a cluster

When the profile has all the desired bells and whistles, it’s time to “publish”. This is the big moment.

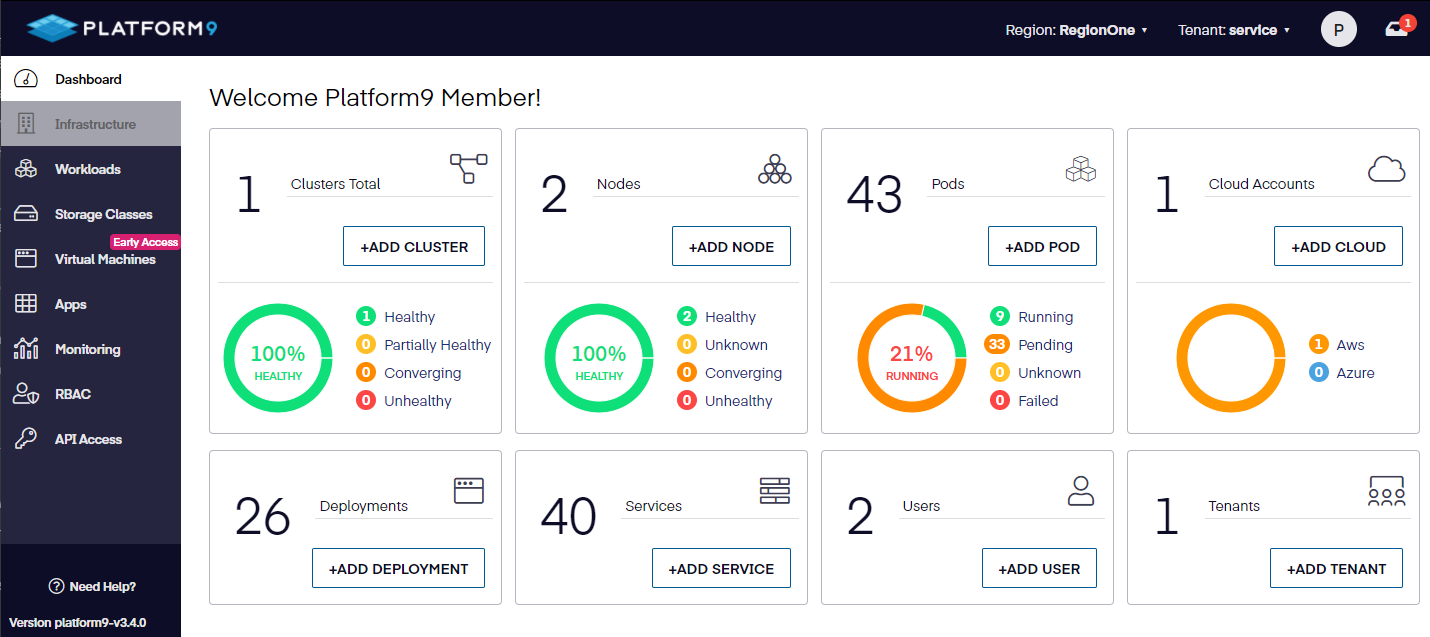

But before we can do that, there are a few prerequisites for a cluster to qualify as bindable to a profile. First, it needs to be running Kubernetes 1.21 or later. With PMK this is a snap; just go to the “Infrastructure” area and look at the “Version” column. If it’s not the latest supported version, you will see an “upgrade” link. That will guide you through updating the cluster in a structured, safe way.
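If you want to check the version prerequisite outside the UI, the comparison is easy to get wrong with plain string matching ("1.9" sorts after "1.21" as text). Here is a minimal sketch that compares versions numerically. The function names and the `MIN_VERSION` constant are made up for this example; only the 1.21 minimum comes from the requirement above.

```python
import re

# Minimum Kubernetes version stated in this post for binding a profile.
MIN_VERSION = (1, 21)


def parse_version(version: str) -> tuple:
    """Extract (major, minor) from strings such as 'v1.21.3' or '1.22'."""
    match = re.match(r"v?(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized Kubernetes version: {version!r}")
    return int(match.group(1)), int(match.group(2))


def is_bindable(version: str) -> bool:
    """True when the cluster version meets the minimum (hypothetical helper)."""
    # Tuples compare element by element, so (1, 9) < (1, 21) as expected.
    return parse_version(version) >= MIN_VERSION
```

You could feed this the value from the “Version” column, or whatever version string your own tooling reports for the cluster.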

With the minimum version met, the cluster will also need the profile agent installed. This is even easier than upgrading a cluster. You will be given a link right in the cluster list to “install”. Behind the scenes, the cluster will get a new operator that knows all about profile engine magic. Users can read more about the profile engine using this link.

Now it’s time for the big moment! Choose a cluster you want to apply this RBAC profile to and… wait, do you know what is going to happen when this is applied? What will this impact? You have the option to run an “impact analysis” ahead of applying the profile. Impact analysis reads the target cluster’s roles and compares them against the profile you intend to publish. It gives you the ability to drill down all the way to the verb and see exactly what the differences are.
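To make “drill down all the way to the verb” concrete, here is a rough sketch of that kind of comparison. It is not how PMK implements impact analysis; it only illustrates the idea. It assumes roles are plain dictionaries mapping a role name to a list of rules that use the standard Kubernetes RBAC fields `apiGroups`, `resources`, and `verbs`, and it ignores wildcards, `resourceNames`, and `nonResourceURLs`. The `flatten` and `impact` names are invented for this example.

```python
def flatten(rules):
    """Expand RBAC rules into a set of (apiGroup, resource, verb) triples."""
    return {
        (group, resource, verb)
        for rule in rules
        for group in rule.get("apiGroups", [""])
        for resource in rule.get("resources", [])
        for verb in rule.get("verbs", [])
    }


def impact(profile_roles, cluster_roles):
    """Report, per role, the permissions that differ between profile and cluster."""
    report = {}
    for name in sorted(set(profile_roles) | set(cluster_roles)):
        want = flatten(profile_roles.get(name, []))
        have = flatten(cluster_roles.get(name, []))
        if want != have:
            report[name] = {
                "only_in_profile": sorted(want - have),
                "only_in_cluster": sorted(have - want),
            }
    return report


profile = {"pod-reader": [
    {"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list", "watch"]},
]}
cluster = {"pod-reader": [
    {"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list", "delete"]},
]}
result = impact(profile, cluster)
# result["pod-reader"]["only_in_profile"] == [("", "pods", "watch")]
# result["pod-reader"]["only_in_cluster"] == [("", "pods", "delete")]
```

Flattening to triples is a deliberate simplification: two roles that grant the same permissions through differently grouped rules compare as equal, which is usually what you care about when reviewing access.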

Once you understand the impact, you have the option to “deploy”. The profile is applied to the cluster in a non-destructive way: each role in the profile will be created in the target cluster, but non-matching roles (and verbs) in the cluster will be left unchanged.
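One way to picture a non-destructive apply is as a union rather than a replacement. The sketch below is our reading of that behavior, not the operator’s actual code. It assumes each role is represented as a set of (apiGroup, resource, verb) triples, and `apply_non_destructive` is a hypothetical name.

```python
def apply_non_destructive(profile, cluster):
    """Return the cluster's roles after an additive apply; inputs are not mutated."""
    # Start from a copy of what the cluster already has, so nothing is removed.
    result = {name: set(perms) for name, perms in cluster.items()}
    for name, perms in profile.items():
        # Create the role if it is missing, then add the profile's permissions.
        result.setdefault(name, set()).update(perms)
    return result


profile = {
    "pod-reader": {("", "pods", "get"), ("", "pods", "watch")},
    "deployer": {("apps", "deployments", "create")},
}
cluster = {
    "pod-reader": {("", "pods", "get"), ("", "pods", "delete")},
    "legacy-admin": {("", "secrets", "get")},
}
after = apply_non_destructive(profile, cluster)
# "deployer" is created, "legacy-admin" is left alone, and "pod-reader"
# keeps its extra "delete" verb while gaining "watch".
```

The practical consequence is that applying a profile alone will not remove extra permissions from a cluster, which is why a separate drift report is still useful after a deploy.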

Analyzing cluster RBAC drift

So far we’ve been comparing a profile and a cluster from the profile’s perspective, answering the question “how is this profile different from a cluster?” The profile engine has an alternate point of view called “Drift Analytics”. This perspective answers the question “what will the cluster look like after the profile is applied?” It’s a useful way of reporting on an intended change without going the full “deploy” route.

The comparison provided by Drift Analytics is very detailed, similar to the Impact Analysis. But, unlike the Impact Analysis, you do not have the option to deploy the result of an analysis. Drift Analytics produces a read-only report that is only available at the time of analysis, so if you want others to review the report (or save it for further work) you can “Export” it as JSON.

We recommend saving this file in a git repository (similar to the cluster profile itself). You can then collaborate with others, have a full history of changes, and “Import” the analysis report back into the UI for convenient visualization.
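If you post-process an exported report before committing it, writing the JSON in a stable form keeps your git diffs small. Here is a minimal sketch; the report contents in the comment are invented for illustration and are not the real export schema, and `serialize_report` and `save_report` are hypothetical helper names.

```python
import json
from pathlib import Path


def serialize_report(report: dict) -> str:
    """Render a report as stable, diff-friendly JSON text."""
    # Sorted keys and a fixed indent make the output independent of
    # dictionary key order (list order is left as-is).
    return json.dumps(report, indent=2, sort_keys=True) + "\n"


def save_report(report: dict, path: str) -> None:
    """Write the report to `path`, creating parent directories if needed."""
    target = Path(path)
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(serialize_report(report), encoding="utf-8")


# Example with a made-up report shape:
# save_report({"cluster": "staging", "roles": {}}, "reports/staging-drift.json")
```

With a stable layout, a reviewer looking at the commit sees only the permissions that actually changed between two analyses.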

Automating analysis

Our UI offers great features for comparing a profile to a cluster as well as importing/exporting drift analysis for detailed differences. Some of these actions can also be automated on a recurring basis. Remember our environments? You need to know when a role difference between them occurs and what the impact would be if a profile were applied. You may also want to archive profile definitions every so often as a backup.

There is a sub-feature of our Qbert API called sunpike. When the profiles engine was installed on the cluster (see above), the operator added several custom resource definitions (CRDs) to the cluster. These CRDs add new endpoints to the K8s API for accomplishing everything related to cluster profiles.
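Custom resources follow the standard Kubernetes URL layout: `/apis/<group>/<version>/<plural>` for cluster-scoped objects, with a `namespaces/<namespace>` segment added for namespaced ones. The sketch below builds those paths. The group, version, and resource names in the example are placeholders, not the real names installed by the profile engine; running `kubectl get crds` against your cluster will show the actual ones.

```python
def custom_resource_path(group, version, plural, namespace=None, name=None):
    """Build the Kubernetes API path for a custom resource or a collection."""
    parts = ["apis", group, version]
    if namespace:
        # Namespaced resources live under /namespaces/<namespace>/.
        parts += ["namespaces", namespace]
    parts.append(plural)
    if name:
        # A trailing name addresses a single object instead of the list.
        parts.append(name)
    return "/" + "/".join(parts)


# Placeholder names, for illustration only.
GROUP = "profiles.example.com"
VERSION = "v1alpha1"

list_path = custom_resource_path(GROUP, VERSION, "clusterprofiles")
item_path = custom_resource_path(
    GROUP, VERSION, "clusterprofiles", namespace="default", name="golden"
)
```

Any HTTP client or Kubernetes client library that can reach the API server can then read these endpoints on a schedule, which is the basis for automating the comparisons described above.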

Getting started

To get started using the profile engine, visit our learning site and choose the infrastructure that best suits your needs. If you want to learn more about how things work, read the docs. All in all, the new cluster profiles feature is an advancement you will not find on other platforms.