In this blog post we are going to go over a few examples of how to create Ingress resources that use Cert-Manager and the Nginx Ingress Controller on Platform9 Managed Kubernetes. There are two videos that go over the same configuration. Feel free to watch the videos and then reference this blog for the examples, or if you prefer text-based explanations then read on and we’ll go over the setup.

Account Setup

To get started with Cert-Manager and Nginx we first need a working Kubernetes cluster using Platform9 Managed Kubernetes, or any working Kubernetes cluster (YAML examples below).

Once we have configured and deployed a Kubernetes cluster, we need to add a Helm App Catalog Repository. We’re going to use the Certified Apps repository from Platform9 so that we can deploy Cert-Manager and the Nginx Ingress Controller.

https://platform9.com/docs/kubernetes/certified-apps-overview

After the App Catalog has been updated we can go ahead and deploy Cert-Manager. Name the deployment cert-manager, select the latest version and the Default namespace, then update the email address in the configuration options. Use an email address that can receive notifications from Let’s Encrypt. After updating the email address we can select deploy.
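If you are following along on a cluster without the Platform9 App Catalog, you will likely need to create the issuer yourself. The Ingress examples in this post reference a ClusterIssuer named cert-manager, and the sketch below writes a manifest for one. It assumes the Let's Encrypt production ACME endpoint and an HTTP-01 solver served through the nginx ingress class. The email address, the account-key secret name, and the file name cluster-issuer.yaml are placeholders, and the issuer created by the Platform9 chart may be configured differently.

```shell
# Write a ClusterIssuer manifest for Let's Encrypt.
# Sketch only: the values marked below are assumptions, not taken from the Platform9 chart.
cat > cluster-issuer.yaml <<'EOF'
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: cert-manager            # must match the cert-manager.io/cluster-issuer annotation
spec:
  acme:
    server: https://acme-v02.api.letsencrypt.org/directory
    email: you@example.com      # placeholder: address for Let's Encrypt notifications
    privateKeySecretRef:
      name: cert-manager-account-key   # hypothetical secret name for the ACME account key
    solvers:
      - http01:
          ingress:
            class: nginx        # assumption: challenges are served by the Nginx Ingress Controller
EOF
```

Apply it with kubectl apply -f cluster-issuer.yaml, then run kubectl get clusterissuer and wait for it to report Ready.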

Next up we need to deploy the Nginx Ingress Controller. We’re going to use the option “With-LB” so that we can make use of the LoadBalancer service in our AWS Kubernetes deployment. Set a name and select the latest version, select the Default namespace, and then hit deploy. After the deployment finishes we will need to find the hostname used for the ELB. To do this we can run:

    kubectl get service

The output will give us a hostname that we can use to set up a CNAME record for our domain. Update your domain’s DNS for the subdomain that you want to use for this example. In our example we use the subdomain “demo” and point it to UUID.us-west-2.elb.amazonaws.com. This will send all traffic for demo.DOMAIN.com to our LoadBalancer. Ingress resources will be used to direct the traffic once it has been sent into our cluster.
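To avoid copying the hostname by hand, it can be read directly from the service status. This is a minimal sketch: lb_hostname is a hypothetical helper, and the service name you pass to it is whatever the Nginx Ingress Controller deployment created in your cluster (look for the entry of type LoadBalancer in the kubectl get service output).

```shell
# Print the external hostname of a LoadBalancer service.
# Usage: lb_hostname SERVICE [NAMESPACE]
lb_hostname() {
  kubectl get service "$1" --namespace "${2:-default}" \
    --output jsonpath='{.status.loadBalancer.ingress[0].hostname}'
}
```

For example, lb_hostname my-nginx-controller, where my-nginx-controller is a placeholder service name. On providers that assign an IP address instead of a hostname, the field to read is .ip rather than .hostname.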

We are going to go over two different examples. The first example will create a single deployment and service and will send traffic to “/” on our domain. The second example will add a second service and deployment and will split traffic based on the endpoint.

The examples can be used on any cluster that has a LoadBalancer service with a public IP. The beginning of this guide covers installation on AWS. If you install on BareOS and your LoadBalancer uses a public IP address (more than likely through MetalLB), you only need to change the DNS records you configure for your domain: with an IP address instead of a hostname, create an A record instead of a CNAME record.
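The choice between the two record types can be sketched as a small shell helper. dns_record_type is a hypothetical name, and the check only recognizes IPv4 addresses; an IPv6 address would need an AAAA record, which this sketch does not handle.

```shell
# Print the DNS record type to create for a LoadBalancer address:
# "A" for an IPv4 address, "CNAME" for anything else (treated as a hostname).
dns_record_type() {
  if printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'; then
    echo "A"
  else
    echo "CNAME"
  fi
}

dns_record_type "203.0.113.10"                      # prints: A
dns_record_type "UUID.us-west-2.elb.amazonaws.com"  # prints: CNAME
```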

Cert-Manager and Nginx Ingress Examples

Single Deployment and Service

Deployment

Save the deployment as hello.yaml, then create the deployment with:

    kubectl create -f hello.yaml

Contents of hello.yaml:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: hello-world
      labels:
        app: hello-world
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: hello-world
      template:
        metadata:
          labels:
            app: hello-world
        spec:
          containers:
            - name: hello-world
              image: k8s.gcr.io/echoserver:1.4
              ports:
                - containerPort: 8080

Service

Save the service as hello-service.yaml, then create the service with:

    kubectl create -f hello-service.yaml

Contents of hello-service.yaml:

    apiVersion: v1
    kind: Service
    metadata:
      name: hello-world
    spec:
      ports:
        - port: 8080
      selector:
        app: hello-world

Ingress

Our Ingress Resource is set up to send traffic for demo.DOMAIN.com/ to our hello-world service. We use an annotation to tell Cert-Manager that we also want a certificate for our URL by naming the cluster issuer “cert-manager”: cert-manager.io/cluster-issuer: "cert-manager". Cert-Manager watches for new Ingress resources and takes action when one carries the annotation and requests TLS. The certificate takes its name from our secretName: demo-key.

Save the ingress resource as hello-ingress.yaml, then create the ingress resource with:

    kubectl create -f hello-ingress.yaml

Contents of hello-ingress.yaml:

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: hello-world
      namespace: default
      annotations:
        cert-manager.io/cluster-issuer: "cert-manager"
    spec:
      tls:
        - hosts:
            - demo.DOMAIN.com
          secretName: demo-key
      rules:
        - host: "demo.DOMAIN.com"
          http:
            paths:
              - pathType: Prefix
                path: "/"
                backend:
                  service:
                    name: hello-world
                    port:
                      number: 8080

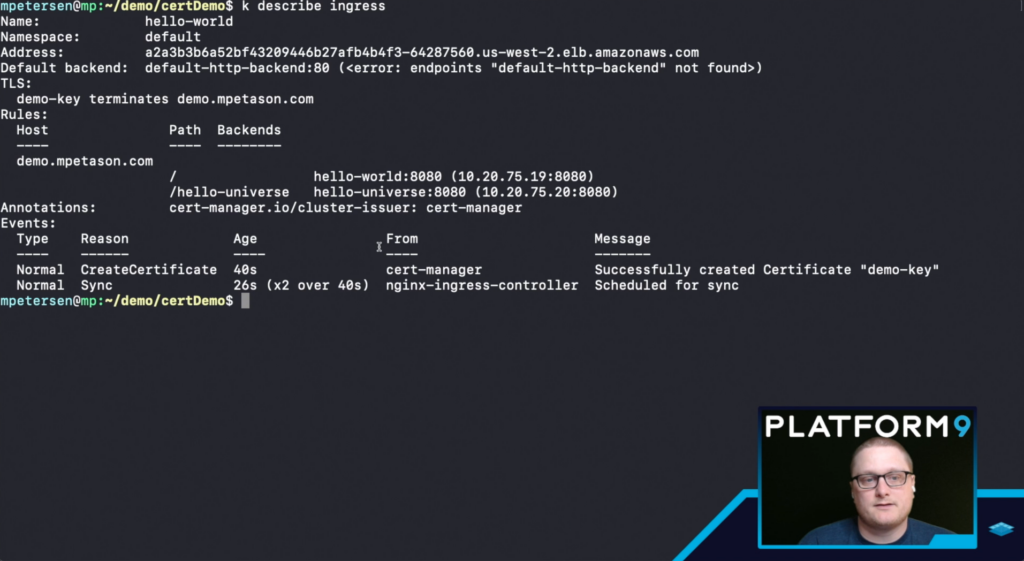

Now that we have deployed the first example we need to wait for the certificate to be provisioned. We can see the status by running:

    kubectl get certificate

We are waiting for the certificate to reach a Ready status. If it is not Ready within a few minutes, run kubectl describe certificate for details. With only one Certificate this shows that certificate; if there is more than one, run kubectl describe certificate CERT-NAME instead. One common cause of failed certificates is DNS resolution: the DNS record needs enough time to propagate and resolve before the certificate is created. With a lower TTL this shouldn’t take too long.

We can also get information about the Ingress Resource by running kubectl get ingress. If there is more than one Ingress Resource, we can run kubectl get ingress INGRESSNAME to see the status of a specific one.
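Instead of polling by hand, kubectl wait can block until the certificate is Ready. The sketch below wraps it in a hypothetical wait_for_cert helper and prints the describe output if the wait times out. It assumes the Certificate is named demo-key after the secretName in the Ingress; confirm the actual name with kubectl get certificate before relying on it.

```shell
# Wait for a Certificate to become Ready; on timeout, print its details.
# Usage: wait_for_cert NAME [NAMESPACE] [TIMEOUT]
wait_for_cert() {
  cert_name="$1"
  cert_namespace="${2:-default}"
  cert_timeout="${3:-300s}"
  if ! kubectl wait --for=condition=Ready "certificate/${cert_name}" \
      --namespace "${cert_namespace}" --timeout="${cert_timeout}"; then
    # The events at the bottom of the describe output usually show why issuance is stuck.
    kubectl describe certificate "${cert_name}" --namespace "${cert_namespace}"
    return 1
  fi
}
```

For this example the call would be wait_for_cert demo-key, which waits up to five minutes in the default namespace.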

Once everything has been created and is in a ready status (including the certificate) we can open the page in a browser. The URL will be demo.DOMAIN.com/ – which should redirect to https and now have a valid certificate. The output will show you information about the endpoint as we’re running the k8s.gcr.io/echoserver:1.4 container.
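The same check can be made from a terminal with curl. This is a small sketch with a hypothetical http_redirect helper that prints the status code and redirect target for a plain-HTTP request; the exact 3xx code depends on how the ingress controller is configured.

```shell
# Print the HTTP status code and redirect target for a plain-HTTP request.
# Usage: http_redirect HOST
http_redirect() {
  curl --silent --show-error --output /dev/null \
    --write-out '%{http_code} %{redirect_url}\n' "http://$1/"
}
```

Running http_redirect demo.DOMAIN.com should print a 3xx status followed by the https:// URL. To confirm the certificate itself, run curl https://demo.DOMAIN.com/ without the --insecure flag; curl exits with an error if the certificate cannot be verified.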

Multiple Deployments and Services

Deployment #2

Save the second deployment as hello-universe.yaml, then create the deployment with:

    kubectl create -f hello-universe.yaml

Contents of hello-universe.yaml:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: hello-universe
      labels:
        app: hello-universe
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: hello-universe
      template:
        metadata:
          labels:
            app: hello-universe
        spec:
          containers:
            - name: hello-universe
              image: mpetason/hello-universe
              ports:
                - containerPort: 8080

Service #2

Save the second service as hello-universe-service.yaml, then create the service with:

    kubectl create -f hello-universe-service.yaml

Contents of hello-universe-service.yaml:

    apiVersion: v1
    kind: Service
    metadata:
      name: hello-universe
    spec:
      ports:
        - port: 8080
      selector:
        app: hello-universe

Ingress

Save the Ingress Resource as hello-ingress.yaml and either delete the first Ingress resource or apply this resource over it. To delete and recreate, run kubectl delete ingress hello-world, then kubectl create -f hello-ingress.yaml. If you want to apply the updates instead of deleting:

    kubectl apply -f hello-ingress.yaml

In this resource we have specified a second path, /hello-universe, which will pick up traffic for demo.DOMAIN.com/hello-universe and send it to our hello-universe service, which will end up resolving to our hello-universe pod.

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: hello-world
      namespace: default
      annotations:
        cert-manager.io/cluster-issuer: "cert-manager"
    spec:
      tls:
        - hosts:
            - demo.DOMAIN.com
          secretName: demo-key
      rules:
        - host: "demo.DOMAIN.com"
          http:
            paths:
              - pathType: Prefix
                path: "/"
                backend:
                  service:
                    name: hello-world
                    port:
                      number: 8080
              - pathType: Prefix
                path: /hello-universe
                backend:
                  service:
                    name: hello-universe
                    port:
                      number: 8080

Once we have deployed everything and have updated the Ingress resource we should be able to repeat the step above by browsing to demo.DOMAIN.com/ and seeing the same information. In addition to the path for “/” we have created a path for “/hello-universe” which allows us to also browse to demo.DOMAIN.com/hello-universe. This should resolve to a site with a valid certificate and will output “hello universe” in the browser.
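Both paths can also be checked in one pass from the command line. The sketch below defines a hypothetical check_paths helper that requests each path over HTTPS with curl and reports the result; because curl verifies certificates by default, an untrusted certificate shows up as a failure.

```shell
# Request each path over HTTPS and report whether it succeeded.
# Usage: check_paths HOST URL_PATH...
check_paths() {
  host="$1"
  shift
  failed=0
  for url_path in "$@"; do
    # --fail makes curl return non-zero on HTTP errors such as 404 or 503.
    if curl --silent --show-error --fail --output /dev/null "https://${host}${url_path}"; then
      echo "ok   ${url_path}"
    else
      echo "FAIL ${url_path}"
      failed=1
    fi
  done
  return "${failed}"
}
```

For this example the call would be check_paths demo.DOMAIN.com / /hello-universe.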

Conclusion

In the examples given we were able to use the same LoadBalancer for multiple paths. Routing through a single LoadBalancer with Ingress Resources avoids paying for a separate public endpoint for each service. In addition to Ingress, we were also able to set up valid certificates for our endpoints by adding a single annotation to our Ingress Resource. Cert-Manager monitored our Ingress Resources for the correct annotation and automated the provisioning of a valid Let’s Encrypt certificate for our endpoint.

If you ran into any issues please reach out to us on Slack (join slack) or check out one of the videos to follow along.