In my recent post on InfoQ, I discuss the techniques that serverless platforms use “under the hood” to balance performance and cost.

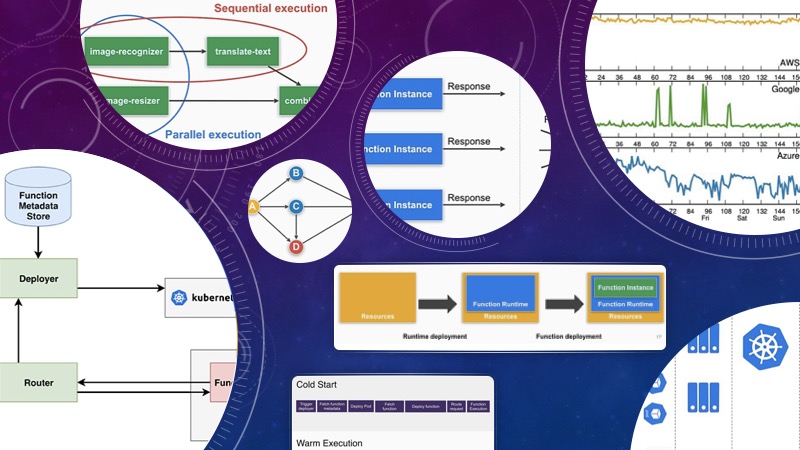

Serverless computing is, for many, the logical next step in cloud computing: it moves applications to a set of higher-level abstractions and offloads more of the low-level operational work to the cloud provider (whether that is a public cloud or an internal infrastructure team). It promises reliable performance on demand while linking pricing directly to the resources used.

As both a researcher and a software engineer in the serverless computing domain, I aim to give you an idea of what goes on under the covers of current state-of-the-art serverless platforms, especially with regard to performance and its impact on cost, and of how you can influence these trade-offs.

Read the full article on InfoQ for the details behind these key takeaways:

- The cost and performance models are two of the key drivers of the popularity of serverless and Function-as-a-Service (FaaS).

- Cold starts have dropped considerably, from multiple seconds to hundreds of milliseconds, but there is still much room for improvement.

- Various techniques are used to improve the performance of serverless functions, most of which focus on reducing or avoiding cold starts.

- These optimizations are not free; it is a trade-off between performance and cost, which depends on the requirements of your application.

- Currently, the closed-source serverless services of public clouds give users few options to influence these trade-offs. Open-source FaaS frameworks that can run anywhere (such as Fission) offer full flexibility to tune them.

- Serverless computing is not just about paying for the resources that you use; it is about paying only for the performance you actually need.
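
To make the performance/cost trade-off from the takeaways above a bit more concrete, here is a minimal toy model in Python. It is only a sketch and does not reflect any real platform's pricing or behavior: the `Scenario` fields, the flat per-second price, and all numbers are hypothetical, and it assumes that keeping instances warm is billed for the full hour on top of execution time.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """Hypothetical workload and platform settings for one hour of traffic."""
    requests_per_hour: int
    exec_seconds: float         # time one invocation spends doing useful work
    cold_start_seconds: float   # extra latency when no warm instance is ready
    cold_start_fraction: float  # share of requests hitting a cold start (0..1)
    warm_instances: int         # instances kept running to avoid cold starts
    price_per_second: float     # assumed flat price per instance-second


def hourly_cost(s: Scenario) -> float:
    """Billed execution time plus the cost of keeping the warm pool running."""
    execution = s.requests_per_hour * s.exec_seconds
    warm_pool = s.warm_instances * 3600
    return (execution + warm_pool) * s.price_per_second


def mean_latency(s: Scenario) -> float:
    """Average latency: execution time plus the expected cold-start penalty."""
    return s.exec_seconds + s.cold_start_fraction * s.cold_start_seconds


# Made-up numbers: the same workload with and without a pre-warmed pool.
on_demand = Scenario(
    requests_per_hour=1000, exec_seconds=0.2, cold_start_seconds=0.5,
    cold_start_fraction=0.10, warm_instances=0, price_per_second=0.00001,
)
pre_warmed = Scenario(
    requests_per_hour=1000, exec_seconds=0.2, cold_start_seconds=0.5,
    cold_start_fraction=0.0, warm_instances=2, price_per_second=0.00001,
)

for name, s in [("on-demand", on_demand), ("pre-warmed", pre_warmed)]:
    print(f"{name}: cost/hour={hourly_cost(s):.4f}, "
          f"mean latency={mean_latency(s):.3f}s")
```

In this toy setup, the pre-warmed scenario removes the cold-start penalty but pays for idle capacity, which is the kind of trade-off the article argues should depend on what your application actually requires.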