OpenStack Storage Options: Tutorial and Use Cases

This OpenStack storage options tutorial reviews the storage options available in OpenStack and the use cases for each. It serves as a building block for follow-up tutorials that dive deeper into OpenStack Cinder features. Unless explicitly noted as a Platform9-specific feature, the concepts discussed apply to any OpenStack storage implementation.

OpenStack Storage Options – At A Glance

There are currently four OpenStack storage options available: ephemeral storage, block storage (OpenStack Cinder), file storage (OpenStack Manila) and object storage (OpenStack Swift). The table below outlines the differences between the four OpenStack storage options:

| | Ephemeral Storage | Block Storage | File Storage | Object Storage |
| --- | --- | --- | --- | --- |
| Used to… | Run the operating system and provide scratch space | Store persistent data on devices accessed as hard drives | Store persistent data in file shares | Store large amounts of persistent data requiring concurrent access |
| Accessed through… | A file system | A block device | A file system | REST API |
| Accessible… | From within a VM | From within a VM | From within a VM | Anywhere |
| Managed by… | OpenStack Compute (Nova) | OpenStack block storage (Cinder) | OpenStack file storage (Manila) | OpenStack object storage (Swift) |
| Persists until… | VM is deleted | Deleted by user | Deleted by user | Deleted by user |
| Sizing determined by… | Administrator-configured size settings, known as flavors | Specified by user in initial request | Specified by user in initial request | Amount of available physical storage |
| Example of typical usage… | 10 GB first disk, 30 GB second disk | 1 TB disk | 10 TB file share | 10s of TBs of dataset storage |


Ephemeral Disks

This is the default OpenStack storage option used for creating VMs. Virtual machine instances are typically created with at least one ephemeral disk, which holds the VM guest operating system and boot partition.

Ephemeral Disks With KVM

An ephemeral disk is purged and deleted when the KVM instance it belongs to is deleted by the cloud user or admin. Ephemeral disks are often used in cloud-native application use cases where VMs are expected to be short-lived and the data does not need to persist beyond the life of a given VM. This may include VMs with data that is cached for short-term use, VMs hosting applications that replicate their data across multiple VMs, or VMs whose persistent data is saved on Cinder or Swift storage.

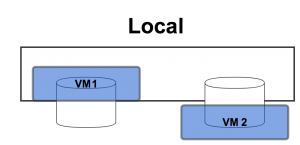

Ephemeral disks are typically created using the local/direct-attached storage of the compute/hypervisor node. The storage can be disks in the host server enclosure or in external enclosures accessed via a cable. In either case, the storage can only be accessed by the compute node running on that host server and the VMs it hosts.

OpenStack storage option: Ephemeral Disk with local storage

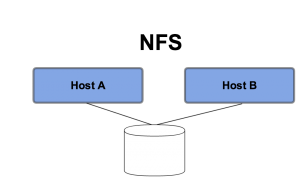

Another option for ephemeral storage is to host it on external storage via an NFS mount. In this scenario, the data is still purged when the VM using the virtual disk on the file share is deleted. However, using an NFS mount for the VM root disk does allow for simpler VM migration, since multiple compute nodes can be given access to the same file share. This method also allows for recovery of VMs when a hypervisor crashes or dies, as long as the NFS mount is accessible to at least one other hypervisor node.

An administrator makes ephemeral storage available to OpenStack by allocating either local storage on a hypervisor or an NFS shared storage volume mounted on a hypervisor as the default storage location for virtual machine instances. This is typically done while adding a new hypervisor to OpenStack. When adding new hosts to Platform9 Managed OpenStack, the default location for ephemeral storage is /opt/pf9/data/instances/, which you can configure at the time of adding the host.

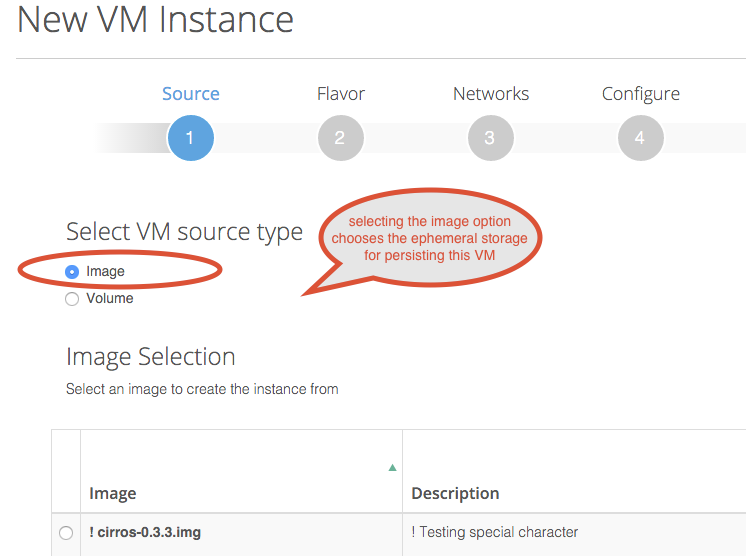

When you create a virtual machine instance using OpenStack, the default storage used is ephemeral storage unless you explicitly choose a Cinder volume to create your VM from.

During VM creation, once the OpenStack placement engine finds the appropriate hypervisor to place the VM on, the image file is copied over to the local storage of that hypervisor, where it is cached for future use. A VM is then usually created by spawning a delta disk from the image’s base disk.
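The caching and delta-disk behavior described above can be illustrated with a small toy model. This is only a sketch for building intuition, not how Nova or any hypervisor implements it: the class names (`BaseImage`, `DeltaDisk`, `Hypervisor`), the block-dictionary representation, and the `copies` counter are all assumptions made for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BaseImage:
    """Read-only image cached on a hypervisor; maps block number to data."""
    name: str
    blocks: dict[int, bytes]


@dataclass
class DeltaDisk:
    """Per-VM disk that records only the blocks written by that VM."""
    base: BaseImage
    written: dict[int, bytes] = field(default_factory=dict)

    def read(self, block: int) -> bytes:
        # Prefer the VM's own copy; fall back to the shared base image.
        if block in self.written:
            return self.written[block]
        return self.base.blocks.get(block, b"")

    def write(self, block: int, data: bytes) -> None:
        # Writes never touch the base image, so other VMs are unaffected.
        self.written[block] = data


class Hypervisor:
    """Caches base images locally so later VMs skip the copy step."""

    def __init__(self, image_store: dict[str, BaseImage]):
        self.image_store = image_store  # stands in for the image service
        self.cache = {}                 # images already copied to this host
        self.copies = 0                 # number of image copies performed

    def spawn(self, image_name: str) -> DeltaDisk:
        if image_name not in self.cache:
            self.cache[image_name] = self.image_store[image_name]
            self.copies += 1
        return DeltaDisk(base=self.cache[image_name])


store = {"ubuntu": BaseImage("ubuntu", {0: b"bootloader", 1: b"kernel"})}
host = Hypervisor(store)
vm1, vm2 = host.spawn("ubuntu"), host.spawn("ubuntu")
vm1.write(1, b"patched-kernel")
```

In this model the second VM reuses the cached image (only one copy is made), and the write from `vm1` is visible only to `vm1`, because each delta disk stores its own changes on top of the shared base.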

Ephemeral Disks With VMware vSphere

When OpenStack runs on top of VMware vSphere, ephemeral disks are backed by vSphere Datastores. Administrators configure one or more vSphere Datastores and Clusters as resources that OpenStack can use, and virtual machines created using OpenStack are then persisted on these Datastores. Because vSphere features such as HA and vMotion require shared storage, the vSphere Datastores are typically created not on local storage but on some type of shared storage backend that can be accessed by multiple ESXi hosts.

VMware vSphere supports VMs running on NFS using vSphere Datastores. Live migration of VMs is supported using VMware’s vMotion technology, accessed via vCenter. Other shared storage options include Fibre Channel and iSCSI.

Cinder Block Storage

Cinder enables persistent block storage volumes and is one of the most important and popular OpenStack storage options. In early OpenStack releases, block storage was implemented as a component of Nova Compute called Nova Volumes, which has since been refactored out as its own project called Cinder. Cinder provides cloud instances with block storage volumes that persist even when the instances they are attached to are deleted.

Note that Cinder is NOT a shared storage technology, even though the storage backend is typically a shared storage device. By default, a Cinder volume can be attached to only one instance at a time, but it can be detached from one instance and attached to another. A useful analogy is a USB drive, which can be unplugged from one machine and plugged into a second, with the data on the drive staying intact. As such, Cinder does not preclude the need to move data volumes when doing a VM migration.
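The attach, detach, and re-attach behavior in the USB drive analogy can be sketched with a few lines of Python. This is a conceptual model only and does not call the Cinder API: the `Volume` class, the `VolumeInUseError` exception, and the instance names are hypothetical and exist purely for illustration.

```python
class VolumeInUseError(Exception):
    """Raised when a volume is already attached to another instance."""


class Volume:
    """Toy model of a block volume with single-attach semantics."""

    def __init__(self, name, size_gb):
        self.name = name
        self.size_gb = size_gb
        self.data = {}           # contents survive attach/detach cycles
        self.attached_to = None  # name of the instance using the volume

    def attach(self, instance):
        # Refuse a second attachment while the volume is in use.
        if self.attached_to is not None:
            raise VolumeInUseError(
                f"{self.name} is already attached to {self.attached_to}"
            )
        self.attached_to = instance

    def detach(self):
        # Detaching releases the volume but leaves its data untouched.
        self.attached_to = None


vol = Volume("data-vol", size_gb=100)
vol.attach("vm-a")
vol.data["report.txt"] = "quarterly numbers"

try:
    vol.attach("vm-b")  # fails: vm-a still holds the volume
    second_attach_allowed = True
except VolumeInUseError:
    second_attach_allowed = False

vol.detach()
vol.attach("vm-b")  # succeeds, and the data written via vm-a is still there
```

The point of the sketch is that the volume's data lives independently of any instance, while the attachment itself is exclusive.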

Cinder based persistent block storage has several potential use cases in contrast to other OpenStack storage options such as ephemeral storage or object storage.

Advantages of Cinder Block Storage:

- If for some reason you need to delete and relaunch an instance, you can keep any “non-disposable” data on Cinder volumes and re-attach them to the new instance.

- If a compute node crashes, you can launch new instances on surviving compute nodes and attach Cinder volumes to those new instances with the data intact.

- You can extend a Cinder volume if you need to grow the capacity of a disk being used by a cloud instance.

- By hosting Cinder volumes on a dedicated storage node or storage subsystem, you can provide more capacity than is available from the direct-attached storage in the compute nodes. (This is also true of ephemeral storage on shared NFS, but without data persistence.)

- By hosting Cinder volumes on an enterprise storage solution, you can leverage vendor-specific features such as thin provisioning, tiering, and Quality of Service.

- A Cinder volume can be used as the boot disk for a cloud instance; in that scenario, an ephemeral disk is not required.

A common question when implementing OpenStack is how to choose between the two most common storage options: the default ephemeral storage and persistent Cinder storage. The table below summarizes some of the relevant use cases and can guide you in choosing between ephemeral and Cinder storage:

| Use Case | Ephemeral Storage | Cinder Storage |
| --- | --- | --- |
| Recovering from a failed hypervisor | Instance can be recreated on another hypervisor node but data is inaccessible if ephemeral disks are on local disk. | Recreate instance on another hypervisor node and attach to Cinder volume to access data. |
| Recovering after inadvertently deleting an instance | Data is lost and must be recovered from backup. | Recreate instance and attach to Cinder volume to access data. |
| Adding capacity | Only expandable if on NFS. If on local disk, capacity limited by capacity of hypervisor node. | If using server local disks, capacity limited by capacity of Cinder node. If on array, capacity can be added up to limit of array. |
| Applications with high performance needs | Better for applications with low latency storage requirements. | May be better for applications with high storage IO requirements since you can stripe across more disks. |
| Application requiring QoS | Applications must be statically attached to different storage tiers. No QoS when dealing with resource contention. | Resources can be assigned dynamically if supported by storage backend. |
| Boot volume | Faster boot time if on local disk but boot disks are not persistent if instances are deleted. | Slower boot time due to latency but boot disks are persistent. |

Manila File Storage

Manila is the newest OpenStack storage project and it is designed to simplify the management of file shares for use by VMs. Manila currently supports both NFS and CIFS so file share-as-a-service can be available for both Linux and Windows guests.

As indicated above, ephemeral storage can also use an NFS file share. The difference in the ephemeral use case is that the file share is mounted by the compute node and not by the VM; the VM consumes capacity on the mounted file system in the form of ephemeral virtual disks. With Manila, VMs access file shares directly, and the data stored on those shares persists across VM deletions.

Manila is ideal for unstructured data storage where the durability and overhead of object storage are not required. It is also ideal for use cases where multiple VMs need concurrent access via a file system mount point.

Note that Manila is not currently supported by Platform9.

OpenStack Swift Tutorial (Object Storage)

Swift was included as one of the original OpenStack projects to provide durable, scale-out object storage. Unlike a typical file system, where metadata for a file is hosted in a table, Swift stores an object’s metadata with the object itself. This allows a large quantity of objects to be stored in a single namespace, since there is no master table that has to be interrogated every time an object is accessed. This makes Swift ideal storage for large quantities of unstructured data, such as images and small files.

Swift is also designed to be durable and accomplishes this by replicating all data across multiple physical disks. By default, Swift maintains three copies of every object it stores. Not only does this make Swift highly available, but it also makes Swift well suited to use cases where concurrent access is required. The tradeoff, however, is storage overhead: with three copies, about 67% of raw capacity is consumed by the redundant replicas.
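The 67% figure follows directly from the replica count: with three full copies, two out of every three stored bytes are redundant. The short calculation below works this through; the function name `replica_capacity` and the 300 TB cluster size are assumptions chosen for illustration.

```python
from __future__ import annotations


def replica_capacity(raw_tb: float, replicas: int = 3) -> tuple[float, float]:
    """Return (usable_tb, overhead_fraction) for full-copy replication."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    # Every object is stored `replicas` times, so usable space shrinks
    # by that factor.
    usable_tb = raw_tb / replicas
    # Share of raw capacity occupied by the extra (redundant) copies.
    overhead_fraction = (replicas - 1) / replicas
    return usable_tb, overhead_fraction


usable, overhead = replica_capacity(300.0)
print(f"{usable:.0f} TB usable, {overhead:.0%} of raw capacity holds extra copies")
# prints: 100 TB usable, 67% of raw capacity holds extra copies
```

Put differently, a cluster with 300 TB of raw disk can hold roughly 100 TB of unique object data at the default replica count.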

Unlike a typical file share, Swift objects are not accessed by mounting a file system to a VM. Instead, objects are accessed and manipulated using REST APIs. This makes Swift ideal for applications that need programmatic access to storage. Examples of applications that are well suited to object storage include an online file sharing program or an expense system that needs to store receipt images.
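To make the REST-based access model concrete, here is a minimal sketch of how an application such as the expense system might prepare an object upload using only the Python standard library. The Swift object API addresses objects as `/v1/{account}/{container}/{object}` and authenticates with an `X-Auth-Token` header; everything else here is an assumption for illustration, including the endpoint URL, the `AUTH_demo` account, the container and object names, the token value, and the helper name `build_upload_request`. The request is built but deliberately not sent.

```python
from urllib.parse import quote
from urllib.request import Request


def build_upload_request(endpoint, container, name, body, token):
    """Build (but do not send) a PUT request that stores one object."""
    # The endpoint is assumed to already include the /v1/{account} prefix.
    url = f"{endpoint.rstrip('/')}/{quote(container)}/{quote(name)}"
    return Request(
        url,
        data=body,
        method="PUT",
        headers={
            "X-Auth-Token": token,  # token obtained from the identity service
            "Content-Type": "application/octet-stream",
        },
    )


req = build_upload_request(
    endpoint="https://swift.example.com/v1/AUTH_demo",  # hypothetical endpoint
    container="receipts",
    name="2024/lunch.png",
    body=b"placeholder image bytes",
    token="hypothetical-token",
)
```

Passing `req` to `urllib.request.urlopen` would perform the upload against a real endpoint. Retrieving the object later is a `GET` on the same URL, which is why no file system mount is involved at any point.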

Note that Swift is not currently supported by Platform9.

Refer to OpenStack Storage Tutorial: Cinder Block Storage for a deep dive into Cinder block storage.